Dall-E2 vs Midjourney. Artificial Intelligence changes the art landscape

How can artificial intelligence affect the creative process? Until recently, it was hard to believe how much neural networks could change art...

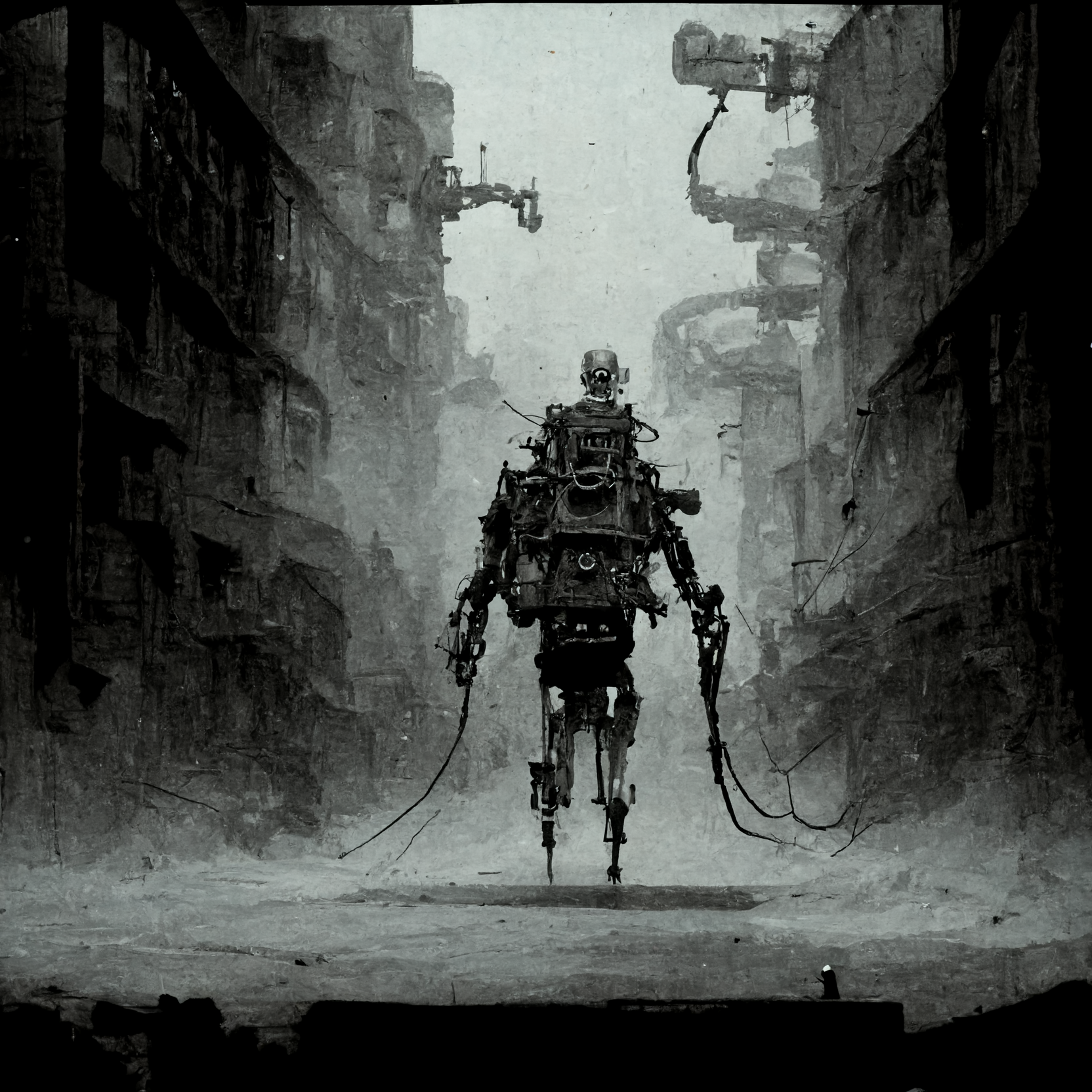

As we know, humans are extremely concerned about the threat of artificial intelligence - there are many incarnations of this primal fear in popular culture, from Terminator to GLaDOS.

But Midjourney and other similar platforms are different. They are capable of creating art, of generating beautiful images from a simple set of words. Or is it art? And are we at the next stage of replacing humans with machines? Nah, we don't think so. It seems to us that this curious revolution may also play into the hands of filmmakers. How exactly? Today we'll try to figure it out by comparing two platforms, Midjourney and Dall-E2. Get ready, it's going to be interesting and insanely beautiful.

Why is it cool?

Both platforms became available in July of this year and have created a furor online. Users began to produce hundreds and thousands of images every day. All because of a simple working principle: artificial intelligence creates pictures from natural language. You enter a sentence - you get a picture.

Images generated by Midjourney

Generated by Dall-E2

The simplicity and accessibility excited the community, leading to controversy and fears that AI will soon completely replace designers and artists. However, after using both platforms, we doubt that designers, VFX artists, and visual creators will be out of work. We see another option: professionals will be able to use Midjourney and Dall-E2 as tools for optimization and simple convenience. After all, we have long been used to Photoshop, which in its time also changed how photos are processed.

So, these programs work quite simply. In the case of Midjourney, everything is straightforward, especially for those who are familiar with the cross-platform messenger Discord. You have two options to choose from: buy paid access in the form of a subscription (prices start at $10), or take your chances and request free access to the open beta. Keep in mind that the platform is under heavy load right now, so the waiting time may be quite long. Once you gain access through Discord, one way or another, you can try out the power of the neural network by simply describing the image you'd like to get. A couple of seconds and voila, Midjourney will generate four picture variations. Also keep in mind that if you do wait for free access, you'll have to work in a general chat room with other users, where the flow of requests and pictures never stops. As a consequence, navigation can become a problem.

Dall-E2, on the other hand, is software developed by OpenAI. Access is granted only after you fill out a request, which places you on a waiting list. No one knows how long you will be there, but cheer up, we hope you will succeed! Personally, we waited a few months. Once you get to the main menu of Dall-E2, you'll see a minimalistic interface with just one line for entering text, and then everything is easy.

So how does it work? Let's compare.

As far as we can tell, Dall-E2 & Midjourney don't just generate images based on thousands and hundreds of thousands of images; these neural networks are also trained to distinguish between individual words. For example, Dall-E2 uses CLIP technology, which is responsible for matching words to images. That is, the network recognizes the words "cowboy", "desert" or "horse", and then draws on the human-understandable aesthetic patterns of Westerns. As a result, it produces an image.

Dall-E2 images
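To make the idea of matching words to images more tangible, here is a minimal sketch in Python. It is not how Dall-E2 or CLIP is actually implemented: the tiny three-number "embeddings", the file names and the `best_matching_image` helper are all made up for illustration. The only point is that text and pictures can be turned into vectors, and the closest pair wins.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical, hand-made embeddings. A real model learns vectors with
# hundreds of dimensions from image-caption pairs; these are toy values.
TEXT_EMBEDDINGS = {
    "cowboy on a horse in the desert": [0.9, 0.1, 0.2],
    "city street at night": [0.1, 0.9, 0.3],
}
IMAGE_EMBEDDINGS = {
    "western_still.png": [0.8, 0.2, 0.1],
    "neon_alley.png": [0.2, 0.8, 0.4],
}

def best_matching_image(prompt):
    """Pick the image whose embedding points in the most similar direction."""
    text_vec = TEXT_EMBEDDINGS[prompt]
    return max(
        IMAGE_EMBEDDINGS,
        key=lambda name: cosine_similarity(text_vec, IMAGE_EMBEDDINGS[name]),
    )

print(best_matching_image("cowboy on a horse in the desert"))
```

With these toy numbers the cowboy prompt lands on the Western still and the night-city prompt on the neon alley, simply because their vectors point in similar directions.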

Many concepts fit the combination of these words, so there will be no fewer options. Another significant technology is diffusion, which finishes the job of forming the picture into a single whole. If CLIP links the words the neural network understands to visual concepts, then diffusion, if I may say so, refines the image, also based on the analysis of similarities.
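The refinement step can be pictured with a toy example as well. The sketch below only illustrates the general idea of starting from random noise and cleaning it up in small steps. It is a heavy simplification and not OpenAI's algorithm: in a real diffusion model a trained network predicts what noise to remove, whereas here we cheat and hand the loop a known `target`. The function name `toy_denoise`, the step count and the 0.2 step size are our own assumptions.

```python
import random

def toy_denoise(target, steps=50, seed=0):
    """Start from Gaussian noise and nudge every value toward `target`.

    `target` is a stand-in for what a trained network would steer toward;
    a real diffusion model never sees the final picture in advance.
    """
    rng = random.Random(seed)
    # Begin with pure noise, one random value per "pixel".
    image = [rng.gauss(0.0, 1.0) for _ in target]
    for _ in range(steps):
        # Each step removes 20% of the remaining difference from the target.
        image = [pixel + 0.2 * (goal - pixel) for pixel, goal in zip(image, target)]
    return image

target_pixels = [0.0, 0.5, 1.0]
print(toy_denoise(target_pixels))
```

After fifty small steps the noisy values sit very close to the target, which is the intuition behind a picture gradually emerging from static.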

Midjourney, in turn, works in a similar way: you set the motif of what you want to see, such as "police, protests, night city, steam," and you get this kind of beauty.

Generated by Midjourney

We couldn't find out what technology is behind Midjourney, but you can see with the naked eye how similar the images are. Or aren’t they?

A close comparison shows that there is a gap. Many users have noted that Midjourney seems to pass off imperfection as beauty: the pictures come out smokier and fuzzier, like analog art. Dall-E2, on the other hand, does a better job with crisp scenes.

Midjourney images

Generated by Dall-E2

We can see that both programs convey contrast very well, but Dall-E2 does so in a tighter way, through crisp lines and light contrast. Midjourney also has strong light contrast, but it is shaped by the drawing technique, if I may say so again. We literally have an oil painting in front of us. Here are a couple more images for comparison.

Created by Midjourney

Dall-E2 pics

The funny thing is that because of the way Dall-E2 works (namely CLIP technology), these images look like collages, layering image upon image, which makes the image look raw, like a sketch.

But what prospects does this promise for us, people immersed in the film industry and those who directly produce a movie?

So far, it's hard to make any predictions. The film industry is still very conservative, and the adoption of AI technology may take years. We have already taken the first step with our script breakdown software powered by Artificial Intelligence (you may also be interested in reading this material). That's why we're fascinated by the promise of Dall-E2 & Midjourney. Just imagine how handy it would be to get a scene sketch based on just a couple of words. We're not saying that these images would serve as storyboard templates, but you could use them as a reference for further, more substantive work with artists, costume designers and fellow cinematographers.

Anyway, it is worth embracing the potential of this technology for revolutionizing the VFX workflow. Just look at how well artificial intelligence handles the creation of space and environment. The Internet is already full of examples and tutorials on how to use automatically generated images for fantasy scenes, for example. And it's not the case that the machine has done all the work for the human. Quite the opposite: Midjourney & Dall-E2 can set the atmosphere and tone for a mise-en-scene, and the rest is up to you. You'll have to shoot the actors against a green screen with the lighting set to match the image, and then do scrupulous work with light, shadows and color in After Effects. In this case, the neural network only reinforces the art of filmmaking.

Nevertheless, it has not been without debate. The first example that comes to mind is that of Jason Allen, a board game developer who won first prize in the Colorado State Fair Fine Arts Competition. Here's his work.

Everything seemed cool, but the catch was that Allen submitted a work he had produced with Midjourney. The painting, titled "Théâtre D'opéra Spatial" and created by artificial intelligence, managed to beat out talented artists. Almost immediately, a debate flared up on Twitter over whether Jason Allen should be considered an artist in such a case, or whether he should be stripped of his prize. The painting is now on the market for $750.

The other position, which by the way is closest to ours, is that AI programs are not capable of creating art, because these images are software-generated, based on familiar concepts of aesthetics. They have nothing in common with art, because there is no meaning behind them. Artificial Intelligence does not yet understand what "meaning" is, which is why the results of its work remain a convenient tool in the hands of talented people who are able to comprehend it and turn it into a creative process.

Generated by Midjourney

Generated by Dall-E2

Afterword

The facts speak for themselves: artificial intelligence is now able to create an image, essentially art, with beautiful colors, light and composition. It's incredible! It is unbelievably fun to work with Dall-E2 & Midjourney, and you won't even notice how you end up sitting in front of the screen for hours, wanting more and more images. Even more striking is how deeply artificial intelligence is being integrated into various professional fields. Yes, it raises a lot of ethical debate, but we believe that if technology can speed up and optimize the process of creating, for example, a film, why not take advantage of it? Humans are responsible for the creation of all this incredible technology, which means we can and definitely will regulate its integration. That is why it is hard to believe that neural networks will ever be able to replace distinctive creative minds.
